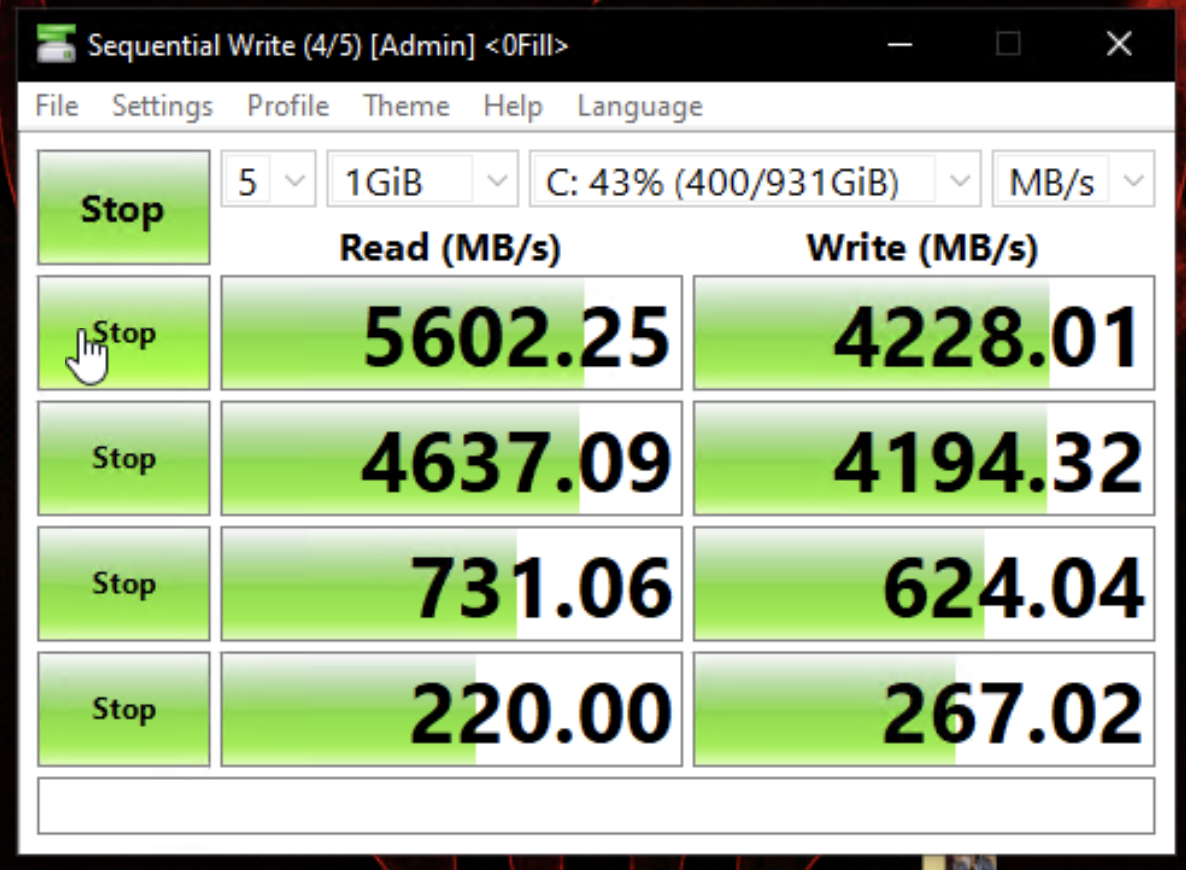

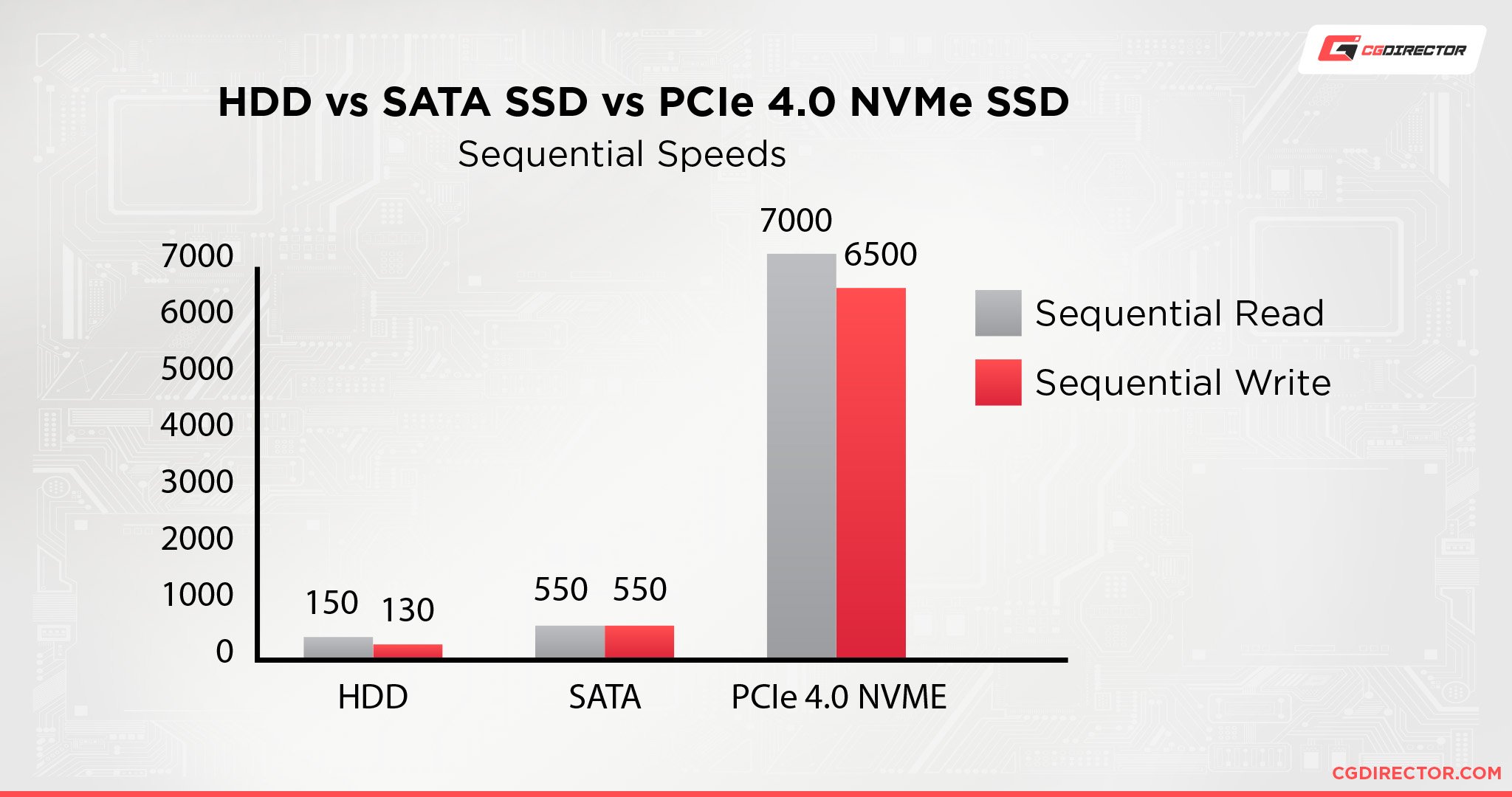

You mentioned 24 and 40 gigabyte files; that's not going to work with a typical 16GB RAM desktop.

Caches it where? Even caching an entire 4+ gig phrase file might be an issue, given everything else that a game engine might want/need to keep in memory.

Caching stuff you won't need is a waste of time and space, and without clairvoyance, it's pretty hard to predict what will be needed in general. Even if it were known, it's not always possible to arrange for data that is needed sequentially at runtime by some particular gameplay to be stored sequentially in the file. It's going to depend on the player path through the game. I'm sure there are occasions where it's likely that if you got chunk A, you'll need B and C as well. It's unlikely that those occasions comprise enough data to make sequential read speed differentials among SSDs apparent to the user. I'll also note that console versions of a game can probably do more aggressive caching, since they can assume that nothing else is running and that the game can use essentially all of available memory. A PC game can't make the same assumption.

For business, it's a massive improvement. An example is our ERP system, on the order of a 2.5 TB SQL database x 3. I used to have to run a physical server for each one, each server with a RAID 10 of SATA or SAS SSDs for each database, plus additional RAID sets for the OS, log, temp, and page file. Significant complexity was removed by consolidating hardware onto NVMe storage. When you start moving around terabytes of data it saves days of wait time. Some jobs I'd start Friday night and pray that they finished by late Sunday night. If a job didn't, I'd have to stop it before operations began and start all over again another weekend, most likely a long holiday weekend.

For desktop use, I'd say there's almost no difference day to day for gaming and general use. My 850 Pro will still deliver similar latency and response times to a 970 Evo Plus and a WD SN750.

Another benefit of NVMe is what the actual manufacturers are making in big SSDs. You can now find 8TB second-hand enterprise SSDs for under $500. You just can't find that in a SATA SSD.

For gaming, probably not. I can tell you one area where it does matter and is noticeable, and that is Virtual Instruments. This is for music production: what you do is stream digital samples off the drive, and you can have thousands streaming at the same time. Pro soundcards can be set to extremely low latency, sometimes less than 1 ms, meaning that if you do, the data has to get prepped, processed, and out the door in short order. The solution to this is loading part of the samples in RAM, so you start streaming that while you wait for the disk to catch up. This has been done for over a decade, since back in the HDD days. But the slower the disk, the more RAM you have to dedicate to samples, which means the less you can load on a given system. Also, at a certain point the disk just maxes out and you drop samples no matter what. Well, some modern samplers will let you cut the RAM use down and up the number of samples streaming if you have faster disks. If you have a fast enough setup, like RAID-0 NVMe disks, you can actually try completely disabling RAM preload. It just streams off the disk.

I got my hands on eight 500GB Crucial MX300 SSDs for cheap. “Great, let's try improving my free (company-wants-to-scrap-server) server with these bad boys.”

Boot disk: Samsung 980 (the villain, a cheap 1TB cache-less NVMe SSD)

8x Crucial MX300 500GB SSDs, all connected to the Dell PERC H310

I am running Rocky Linux 9.2 with kernel 5.14.0. I wanted to see if my idea actually was good: create a ZFS pool of 4 mirrored vdevs in a single pool for Postgres (the goal of this server).

The zpool is configured via the following command:

The NVMe SSD is using XFS default settings in /etc/fstab.

Surely the 8 SSDs, in the layout optimal for random 4k performance, will outperform a single Samsung 980.
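To make the "4 mirrored vdevs in a single pool" idea from the post concrete, here is a minimal Python sketch that pairs eight disks into two-way mirrors and prints the resulting `zpool create` argument list. It is an illustration of the layout, not the command actually used on this server: the pool name `pgpool` and the `/dev/sdX` device names are hypothetical, and the script only prints the command, it never runs it.

```python
from typing import List


def mirrored_pool_command(pool: str, disks: List[str]) -> List[str]:
    """Build the argv for `zpool create`, pairing the disks into 2-way mirrors."""
    if len(disks) < 2 or len(disks) % 2 != 0:
        raise ValueError("need an even number of disks, at least two")
    argv = ["zpool", "create", pool]
    # Each consecutive pair of disks becomes one mirror vdev.
    for i in range(0, len(disks), 2):
        argv += ["mirror", disks[i], disks[i + 1]]
    return argv


def usable_gb(disk_gb: int, disk_count: int) -> int:
    """A 2-way mirror stores one disk's worth of data, so half the raw capacity."""
    return disk_gb * (disk_count // 2)


# Hypothetical device names; real systems should prefer stable /dev/disk/by-id paths.
disks = [f"/dev/sd{letter}" for letter in "abcdefgh"]
command = mirrored_pool_command("pgpool", disks)

print(" ".join(command))
print(usable_gb(500, len(disks)), "GB usable before ZFS overhead")
```

With eight 500GB drives this yields four mirror vdevs and roughly 2000 GB of usable space. ZFS stripes writes across the vdevs, which is why this layout is usually the one picked for random I/O workloads such as a database.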
